Have Computers Made Us More Productive? A Puzzle

For many Americans, the proliferation of the personal computer has transformed the workplace more than any other innovation. Over the past 15 years or so, this transformation, sometimes called the "Information Revolution," has caused many firms to rethink their organizational structures and management procedures. The Information Revolution is also commonly credited with creating huge gains in workplace productivity, which, in turn, have led to higher wages.

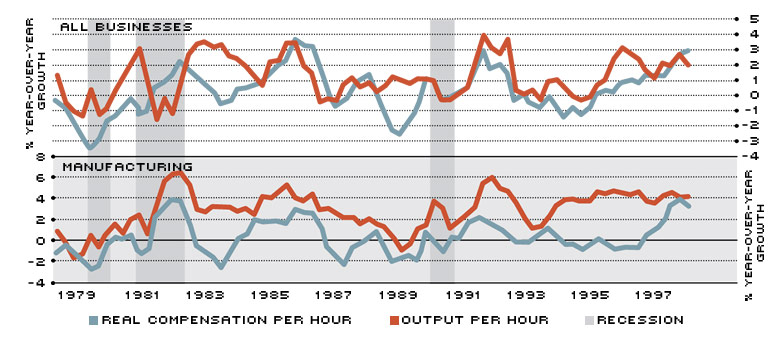

The catch, though, is that these huge gains in productivity have not shown up in the national data. Rather, year-over-year gains in overall productivity—measured as output per hour of all persons in the business sector—have failed to suggest that anything unique was occurring in the workplace during this business expansion relative to previous expansions (see top half of chart). In 1996 and 1997, for example, output per hour increased about 2 percent a year; in 1995, it declined 0.1 percent. In fact, since the end of the 1990-91 recession, productivity growth has so far peaked at 3.3 percent in 1992. After the 1981-82 recession, productivity growth peaked at 3.2 percent in 1983. In the 1950s and '60s, in contrast, growth rates above 4 percent were quite common.

The Missing Pieces

The absence of huge productivity gains has created what economists call the productivity paradox. Basically, the paradox is that the official statistics have not borne out the productivity improvements expected from new technology. The United States is not unique in this respect. As economists Erwin Diewert and Kevin Fox have observed, there has been a "measured productivity slow-down in industrialized countries in the last 25 years, the very time when we would have expected to see large increases in productivity growth due to rapid technological change." 1 So, what happened?

Part of the explanation is that there have been payoffs to firms from computer investment, but these payoffs are hard to measure. For example, Zvi Griliches has argued that in measuring productivity, "[computer] investment has gone into our 'unmeasurable sectors,' and thus its productivity effects, which are likely to be quite real, are largely invisible in the data." 2 A different argument, advanced by Paul David, compares the onset of computers with the advent of electricity, which took about 40 years before its impact on productivity was observed. 3 Jack Triplett counters that the rapid fall in the price of computing power indicates a different diffusion process for computers than for electrification, making the analogy weak. He writes: "In the computer diffusion process, the initial applications supplanted older technologies for computing. Water and steam power long survived the introduction of electricity; but old pre-computer age devices for doing calculations disappeared long ago." 4

Another explanation for the paradox—put forth by David Romer in 1988—is that, because investment's share of GDP is relatively small, large changes in investment translate into only small changes in output. And since computers represent a modest part of total investment, huge increases in computer investment result in only meager increases in measured output and, hence, measured productivity. Diewert and Fox believe this analysis doesn't work for computers because they are inherently different from other types of capital. They write: "[Computers] can be used to control other capital (and labor), so that the other capital (and labor) is used more efficiently, for example, the management of a warehouse, or coordinating the movement of trucks and airplanes." 5 In other words, computers may actually substitute for other capital—including human capital—thereby replacing, rather than adding to, some of the productivity gains.
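The share argument attributed to Romer above can be illustrated with simple arithmetic. The sketch below is not Romer's model. It only multiplies the shares together to show why a large percentage increase in computer investment can move total output very little. The function name and all three input values are hypothetical, chosen for illustration rather than taken from any data cited in this article.

```python
def output_growth_contribution(investment_share_of_gdp,
                               computer_share_of_investment,
                               computer_investment_growth):
    """Share-weighted contribution of computer investment growth to output growth.

    All arguments are fractions (0.30 means 30 percent).
    """
    # Computers' share of total output is the product of the two shares.
    computer_share_of_gdp = investment_share_of_gdp * computer_share_of_investment
    # A component's contribution to growth is its share times its own growth rate.
    return computer_share_of_gdp * computer_investment_growth


# Hypothetical inputs: investment is 15 percent of GDP, computers are
# 10 percent of investment, and computer investment grows 30 percent.
contribution = output_growth_contribution(0.15, 0.10, 0.30)
print(f"Contribution to output growth: {contribution:.2%}")
```

Under these assumed values, a 30 percent jump in computer investment adds less than half a percentage point to output growth. Diewert and Fox's objection is that this kind of accounting misses any effect computers have on how efficiently other capital and labor are used.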

Is This Where It's Been Hiding?

The productivity paradox has not affected all sectors of the economy, though. U.S. manufacturing, for instance, has experienced relatively strong annual productivity growth over the past few years (see bottom half of chart). In fact, output per hour in this sector has grown more than 4 percent a year since 1995—a sustained rate of increase unequaled since the end of World War II. Perhaps, then, the expected productivity gains are more isolated than anticipated, occurring mostly in those sectors that are extremely capital intensive, like manufacturing.

What hasn't accompanied this relatively strong growth in manufacturing productivity, however, is a commensurate increase in real wages. While output per hour has been growing at more than 4 percent a year, real compensation per hour at manufacturing firms has been growing at less than 1 percent a year. It wasn't until the beginning of 1998 that year-over-year growth in real compensation per hour spiked up to almost 4 percent.

This leads to yet another conundrum, since traditional labor market theory predicts that productivity gains should drive wage increases. Why? Because theory says workers should receive a wage that exactly compensates them for their added value to total output, otherwise known as their marginal revenue product. This is calculated by determining how much additional output an extra hour of work produces (the marginal product) and then figuring out how much extra revenue each unit of that output brings the firm (the marginal revenue); hence the term marginal revenue product. The chain of events, then, would be: investment in computers leads to increases in output per hour (higher productivity), which, in turn, leads to higher wages. For the U.S. manufacturing sector, the chain appears to be holding, although the last link seems weaker than the first. Perhaps, then, investment in computers and information technology has had other, not as easily observed, outcomes.
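The wage rule described above can be written compactly. The notation is an assumption introduced here for illustration, not the article's own: $w$ is the hourly wage, $\text{MP}_L$ is the marginal product of an hour of labor, and $\text{MR}$ is the marginal revenue from a unit of output.

$$
\text{MRP}_L = \text{MP}_L \times \text{MR}, \qquad w = \text{MRP}_L
$$

If marginal revenue is held fixed, the wage is proportional to the marginal product, so the two grow at the same rate:

$$
\frac{\Delta w}{w} = \frac{\Delta \text{MP}_L}{\text{MP}_L}
$$

Measured output per hour is an average rather than a marginal product, so it only approximates $\text{MP}_L$. Even so, on this simple reading, productivity growth above 4 percent a year alongside real compensation growth below 1 percent a year is the gap that theory alone does not explain.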

Hidden Consequences

This is exactly the proposition that David Autor, Lawrence Katz and Alan Krueger examined. In their 1997 article, they argued that the rapid spread of computer technology in the workplace may explain as much as 30 to 50 percent of the increase in the growth rate of demand for more-skilled workers since 1970. The three economists found that the demand for college-level workers grew more rapidly on average from 1970 to 1995 than from 1940 to 1970. This increased demand was initially met with a sufficient supply of college-educated workers. That supply slowed at the beginning of the 1980s, however, eventually causing a shortage that led to a widening of the wage gap between those with and without college degrees.

An even more striking finding by the authors was that industries displaying the largest increases in skill requirements—legal services, advertising and public administration, for example—were the biggest users of computers. 6 Relative to other industries, these have exhibited greater growth in employee computer use and more capital investment in computers both per worker and as a share of total investment. In addition, these high computer-use sectors appear to have reorganized their workplaces in a manner that disproportionately employs more educated—and higher paid—workers.

But simply reorganizing the workplace to accommodate higher-skilled workers isn't the end of the story. How firms reorganize is also important. In a 1997 article, Sandra Black and Lisa Lynch asserted that manufacturing firms that gave workers a significant decision-making role were markedly more productive than firms that did not. Black and Lynch also found that productivity was higher in plants in which a high proportion of nonmanagerial workers used computers, and in plants where workers had high average levels of education. The authors also noted, however, that not all restructuring plans are equal—for example, neither profit-sharing plans designed exclusively for managers nor total quality management programs did anything to improve plant productivity. Profit-sharing plans that included all workers, though, did improve productivity. Black and Lynch's bottom line, then, is that practices that encourage workers to think and interact to improve the production process are strongly linked to increased productivity.

All told, the recent research offers various explanations that support the belief that productivity has been increasing because of computer investment, despite what the data show. The Information Revolution has forced many firms to reconsider their production processes more carefully, often resulting in reorganization. It has also altered their demands for labor, requiring them to recruit better-educated workers than they had previously. Meanwhile, researchers are striving to prove that, upon closer examination, the productivity paradox is not a paradox at all, but, instead, a puzzle in which firms are putting the pieces together faster than the official statistics can.

Endnotes

1. See Diewert and Fox (1998). Like Diewert and Fox, all of the people cited in this article are economists.

2. See Griliches (1994).

3. See David (1990).

4. See Triplett (forthcoming).

5. See Diewert and Fox (1998).

6. See Appendix Table A2 in Autor, Katz and Krueger (1997) for a list of industries and the authors' measures of skill requirement and computer investment.

References

Autor, David H., Lawrence F. Katz, and Alan B. Krueger. "Computing Inequality: Have Computers Changed the Labor Market?" NBER Working Paper 5956 (March 1997).

Black, Sandra E., and Lisa M. Lynch. "How to Compete: The Impact of Workplace Practices and Information Technology on Productivity," NBER Working Paper 6120 (August 1997).

David, Paul A. "The Dynamo and the Computer: An Historical Perspective on the Modern Productivity Paradox," American Economic Review (May 1990), pp. 355-61.

Diewert, W. E., and Kevin J. Fox. "The Productivity Paradox and Mismeasurement of Economic Activity," paper presented at the Eighth International Conference: Monetary Policy in a World of Knowledge-based Growth, Quality Change, and Uncertain Measurement, Bank of Japan, Tokyo (May 1998).

Griliches, Zvi. "Productivity, R&D, and the Data Constraint," American Economic Review (March 1994), pp. 1-23.

Romer, David. "The Productivity Slowdown, Measurement Issues, and the Explosion of Computer Power: Comment," Brookings Papers on Economic Activity, Vol. 2 (1988), pp. 425-28.

Triplett, Jack E. "The Solow Productivity Paradox: What Do Computers Do to Productivity?" Canadian Journal of Economics (forthcoming).

Views expressed in Regional Economist are not necessarily those of the St. Louis Fed or Federal Reserve System.
